Media regulator Ofcom is ramping up its hiring of online safety staff as debate continues over the ability of the UK to properly tackle the spread of misinformation online.

The riots in parts of the UK earlier this month were fuelled by the spread of misinformation in the wake of the stabbings in Southport.

The violence was stoked by a number of false claims spreading online, including that the suspect was an asylum seeker who had arrived in the UK by boat last year. X owner Elon Musk, meanwhile, made references to “two-tier policing” in Britain, reposted a false claim about the Government setting up detainment camps in the Falkland Islands, and interacted with a post from right-wing activist Tommy Robinson – real name Stephen Yaxley-Lennon – about the violence.

It has led to concerns being raised about the ability of Ofcom and new online safety laws to hold social media platforms to account.

There have been calls to strengthen the Online Safety Act, which passed last year but will not come into full effect until at least next year, while the regulator has now confirmed it is expanding its online safety team.

“We’re hiring the talent we need to achieve a safer life online,” an Ofcom spokesperson said.

“In recent years we’ve grown our expertise in technology, data and cybersecurity. We’ve hired specialists from firms such as Meta, Google and Amazon, and experts from bodies like the NSPCC.

“We’ve set up a technology lab in Manchester, using artificial intelligence to test new approaches to safety, and partnered with expert groups and counterparts in the UK and overseas to ensure our regulation is world leading.”

The regulator said its headcount for online safety as of the end of July was 466, and it projected this would grow to 557 by the end of March next year.

Sir Keir Starmer has said the Government will have to “look more broadly at social media” after the recent rioting, in an apparent hint from the Prime Minister that further regulation could be considered.

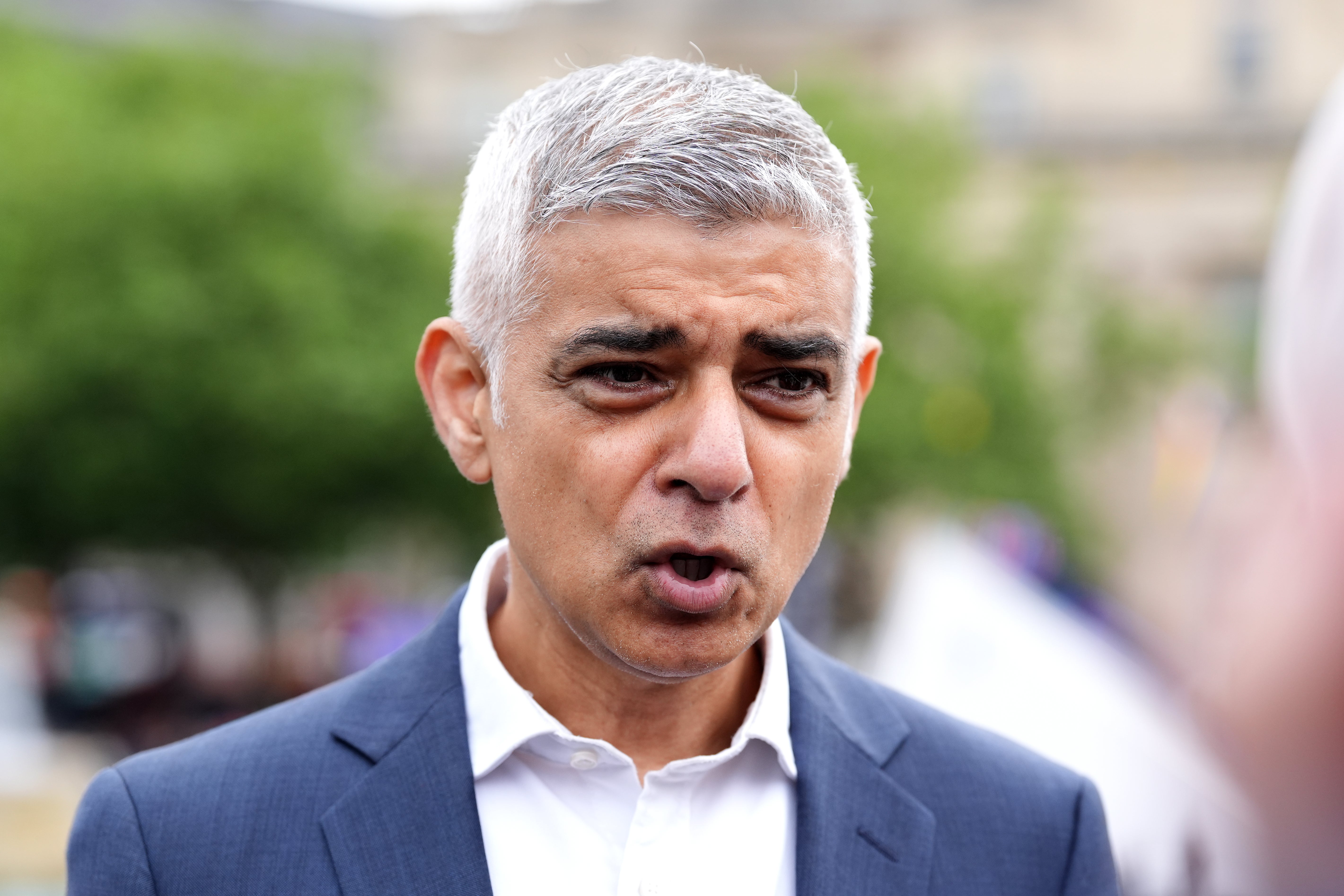

Mayor of London Sadiq Khan has also been among those calling for more action, saying recent events showed that the new rules set to be introduced under the Online Safety Act in its current form were “not fit for purpose”.

The Online Safety Act will require platforms to put in place and enforce safety measures to ensure that users, and in particular young people, do not encounter illegal or harmful content, and that, if they do, it is quickly removed.

Ofcom is still working on the codes of practice and parameters that will guide much of how the new rules will be enforced, but those found in breach could face fines of up to £18 million or 10% of their global revenue, whichever is greater.

In more severe cases, Ofcom will be able to seek a court order imposing business disruption measures, which could include forcing internet service providers to limit access to the platform in question.

And most strikingly, senior managers can be held criminally liable for failing to comply with Ofcom in some instances – a set of penalties it hopes will compel platforms to take greater action on harmful content.

But some have warned that these measures are not broad enough in scope to prevent a repeat of the recent disorder.

Fact-checking organisation Full Fact has previously warned that there is “no credible plan to tackle the harms from online misinformation” and says the “only references” to misinformation in the Act relate to setting up a committee to advise Ofcom about media literacy policy.

Full Fact says the Act in its current form “continues to leave the public vulnerable and exposed to online harms”.